Predictive Modeling in higher education hinges on careful consideration of your training data. This chapter covers a handful of essential elements, including data and variable selection, to get you out the door and on your way, emphasizing the balance between statistical rigor and contextual understanding.

We’ll discuss how overfitting can undermine predictive accuracy and highlight techniques for selecting the most impactful variables in the context of your institution, your students, and the external factors in the orbit of college-going decisions. We’ll also address the challenges of missing data and provide strategies to help your models capture relevant patterns and make reliable future predictions.

Variable Selection

Here is a piece of wisdom from your friendly neighborhood Slater: the more times models are run and the more variables are added, the more likely you are to stumble upon random effects and correlations that won’t be valuable to use in the future. Statistics has a similar principle for multiple comparisons: when you run several tests, you adjust each one to be more conservative about declaring a significant result, and that applies even to a handful of tests. If a model is run 100 times with different variables, nothing is adjusting for those random effects. Be mindful and don’t just go fishing for factors. After all, a p-value threshold of .05 (a standard rule of thumb) means that even if there is no relationship at all, you’d still expect about 5 out of 100 tests to produce a result that looks significant. How many ways did you try to put together the puzzle that is your model(s)?
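To put numbers on the fishing problem, here is a minimal sketch. It assumes the tests are independent and that none of the variables is truly related to the outcome. The function names are made up for illustration, and the Bonferroni adjustment is just one common example of making each test more conservative.

```python
def chance_of_false_positive(num_tests: int, alpha: float = 0.05) -> float:
    """Probability that at least one of `num_tests` independent tests on
    truly unrelated variables still comes back 'significant' at `alpha`."""
    return 1.0 - (1.0 - alpha) ** num_tests


def bonferroni_alpha(num_tests: int, alpha: float = 0.05) -> float:
    """Stricter per-test threshold that keeps the overall false-positive
    rate at or below `alpha` across all tests."""
    return alpha / num_tests


for k in (1, 5, 20, 100):
    print(f"{k:>3} tests: chance of at least one false positive = "
          f"{chance_of_false_positive(k):.3f}, "
          f"Bonferroni per-test threshold = {bonferroni_alpha(k):.4f}")
```

The chance of at least one spurious "finding" climbs quickly as the number of attempts grows, which is why the number of ways you tried to assemble the model matters.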

All of that requires data to begin with. Literature, observations, and correlated variables may help start you down the selection process.

Univariate Analysis: Check correlations between possible predictors and the outcome for initial insight, but complement this with more robust checks.

Use p-values: For each variable, test the significance of its relationship with the outcome (e.g., using chi-square tests for categorical variables or t-tests for continuous variables). This adds a statistical layer to your correlation-based decision.

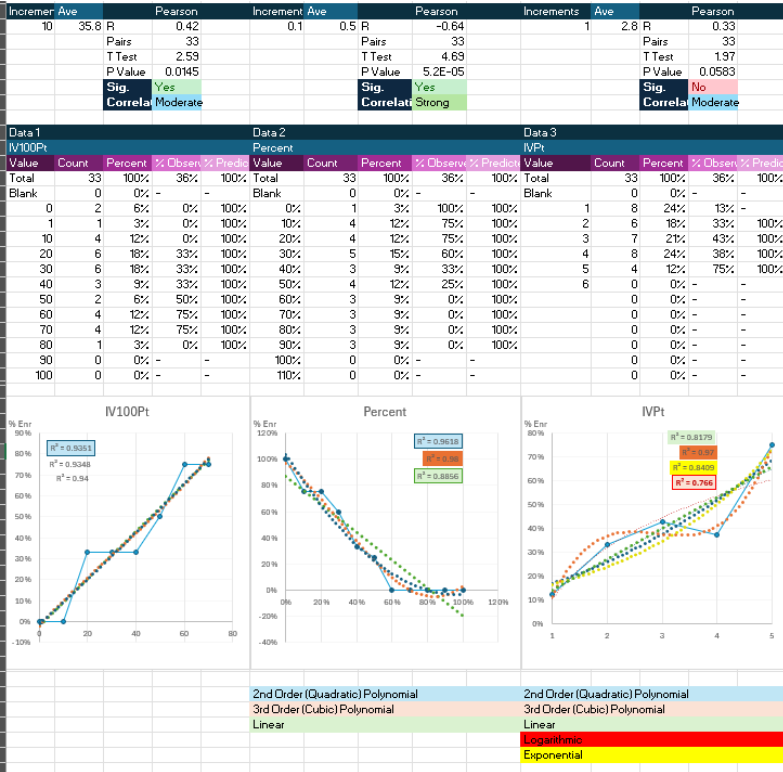

Plotting the relationship between a variable and the outcome also gives you a visual of the correlation. Excel allows you to add a trendline and test the fit (linear, quadratic, etc.). Excel also lets you quickly filter or build separate tabs for certain years and populations to identify trends and to see whether multiple Models are required to accurately predict the outcome.
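If you would rather script the chi-square test mentioned above than run it in a stats package, a sketch for the simplest case might look like this. It assumes one yes/no predictor (here a hypothetical "visited campus" flag) against a yes/no outcome, and the counts are made up for illustration.

```python
import math


def chi_square_2x2(a: int, b: int, c: int, d: int) -> tuple[float, float]:
    """Pearson chi-square test (no continuity correction) for a 2x2 table.

    Hypothetical layout:
                     enrolled   did not enroll
        visited         a             b
        no visit        c             d

    Returns (chi-square statistic, p-value) with 1 degree of freedom.
    """
    n = a + b + c + d
    row1, row2 = a + b, c + d
    col1, col2 = a + c, b + d
    if 0 in (row1, row2, col1, col2):
        raise ValueError("every row and column needs at least one record")
    stat = n * (a * d - b * c) ** 2 / (row1 * row2 * col1 * col2)
    # With 1 degree of freedom, the chi-square tail probability equals
    # a two-sided normal tail probability.
    p_value = math.erfc(math.sqrt(stat / 2.0))
    return stat, p_value


# Made-up counts, for illustration only.
stat, p = chi_square_2x2(a=60, b=140, c=40, d=260)
print(f"chi-square = {stat:.2f}, p = {p:.6f}")
```

For predictors with more than two categories, or for continuous variables, a statistics package is the more practical route.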

Consider Variable Importance Techniques:

- Recursive Feature Elimination (RFE): This helps determine which variables contribute most to model performance.

- Lasso Regression: Lasso can help with variable selection by penalizing the regression model for including too many variables and shrinking some coefficients to zero.
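To see what "shrinking some coefficients to zero" means, here is a sketch of the simplest textbook case: linear regression with standardized, uncorrelated predictors, where the Lasso estimate is the unpenalized coefficient pulled toward zero by the penalty. Real tools fit this iteratively, and logistic models behave only approximately this way. The coefficient values and variable names are hypothetical.

```python
def soft_threshold(coefficient: float, penalty: float) -> float:
    """Pull a coefficient toward zero by `penalty`, stopping at zero."""
    if coefficient > penalty:
        return coefficient - penalty
    if coefficient < -penalty:
        return coefficient + penalty
    return 0.0


# Hypothetical unpenalized coefficients for standardized predictors.
unpenalized = {
    "campus_visit": 0.90,
    "gpa": 0.45,
    "email_opens": 0.08,
    "app_date": -0.03,
}
penalty = 0.10

lasso_like = {name: round(soft_threshold(b, penalty), 2)
              for name, b in unpenalized.items()}
kept = [name for name, b in lasso_like.items() if b != 0.0]
print(lasso_like)
print("Variables kept:", kept)
```

Weak predictors drop out entirely while strong ones survive with slightly smaller coefficients, which is how the penalty doubles as a variable selection tool.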

Stepwise Regression: Use forward selection, backward elimination, or stepwise selection to iteratively build your model by adding or removing variables based on their statistical significance or improvement in model fit (e.g., AIC/BIC criteria).

Multivariate Impact: While correlation with the outcome is useful, always consider how variables perform when combined. A variable with weak correlation on its own might become highly valuable when other variables are included in the model.

Domain Knowledge: Sometimes, variables that don’t seem strongly correlated with the outcome might be conceptually important based on domain knowledge (e.g., demographic factors that influence admissions behavior), and could be considered despite weak correlation in the existing training data.

Feature Engineering: Some variables may not be strong predictors of the outcome on their own, but when viewed in relation to another variable, they become a crucial data point. Perhaps the date the student applied does not add to your model, but what if they applied right after visiting campus, or signed up for a visit the day they received the Admit/Aid Award/Transfer Evaluation?
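The "applied right after visiting" idea can be sketched as a derived variable. The function names and the 7-day window below are assumptions for illustration, not a recommendation. You would tune the window against your own data and build the equivalent logic in your query tool.

```python
from datetime import date
from typing import Optional


def days_from_visit_to_application(visit: Optional[date],
                                   applied: Optional[date]) -> Optional[int]:
    """Days between a campus visit and the application.

    Returns None if either date is missing. A negative value means the
    student applied before visiting.
    """
    if visit is None or applied is None:
        return None
    return (applied - visit).days


def applied_soon_after_visit(visit: Optional[date],
                             applied: Optional[date],
                             window_days: int = 7) -> int:
    """1 if the application came within `window_days` after the visit, else 0."""
    gap = days_from_visit_to_application(visit, applied)
    return int(gap is not None and 0 <= gap <= window_days)


# Made-up dates, for illustration only.
print(applied_soon_after_visit(date(2024, 10, 12), date(2024, 10, 14)))
print(applied_soon_after_visit(None, date(2024, 10, 14)))
```

Neither date says much alone, but the gap between them can carry the signal.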

Training Data

As you begin exploring your data, there are some considerations that you should keep in mind.

Historical Data: 3-5 years of data is often a sweet spot for many Predictive Models in admissions. This provides enough history to capture trends and variation while remaining recent enough to reflect current behaviors. However, always consider factors like stability of patterns, external changes, and data volume specific to your institution.

“Stability” is a stretch at the time of writing this book. Between the differences in offerings, timing, and student situations around the COVID-19 Pandemic, FAFSA “Simplification”, Demographics and Search Cliffs, questions of the value and Values of higher education, and alternatives to the traditional college experience, any given year might have trouble generalizing to and predicting future years.

1. Consistency of Patterns

- Stability Over Time: If the application and enrollment patterns at your institution have remained stable (e.g., admissions policies, marketing strategies, or external factors like the economy haven’t drastically changed), using more years of data (~5 years) can be beneficial. This provides a more comprehensive understanding of typical behaviors and reduces the influence of outlier years.

- Changing Behavior: If there have been significant changes in recruitment strategies, admissions policies, external factors (e.g., pandemic), or shifts in the student population, you may want to focus on more recent data (~2 years). Older data could introduce bias because it might not reflect current behaviors or trends.

2. Volume of Data

- Low Volume of Applicants: If you have fewer applicants and enrollees each year, using more years of data (up to 5-7 years) may be necessary to ensure that your models are trained on a sufficient number of data points. Binary logistic regression models need enough events to make stable predictions.

- High Volume of Applicants: If you have a large number of applications or enrollments per year, you can more easily afford to use fewer years of data (e.g., 2-3 years) since each year provides more robust data for training. A common rule of thumb is 10 events per predictor, though some research I have seen recommends up to 50 events. In enrollment Modeling, the event to count is the least common outcome. With a 20% yield rate, that is enrolling students: 500 admits at a 20% yield gives 100 enrolling students, which supports 10 predictors at 10 enrolling students per predictor.
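The events-per-predictor arithmetic above is easy to script so you can check it against your own funnel. This is a small sketch, and the function name is made up for illustration.

```python
def max_predictors(admits: int, yield_rate: float,
                   events_per_predictor: int = 10) -> int:
    """How many predictors the rule of thumb supports.

    The 'event' is whichever outcome is less common: enrolling or
    not enrolling.
    """
    enrolled = round(admits * yield_rate)
    not_enrolled = admits - enrolled
    events = min(enrolled, not_enrolled)
    return events // events_per_predictor


# The example from the text: 500 admits at a 20% yield rate.
print(max_predictors(500, 0.20))                           # 10 events per predictor
print(max_predictors(500, 0.20, events_per_predictor=50))  # stricter guideline
```

Under the stricter 50-event guideline, the same 500 admits support only 2 predictors, which is one reason smaller institutions may need more years of data.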

3. Changing External Conditions

- External Trends: Consider external factors such as economic shifts, demographic changes, or political policies that may impact application and enrollment decisions. If recent trends are particularly relevant (e.g., post-pandemic admissions behavior), you may want to focus on the last 2-3 years of data to reflect current realities.

- Institutional Changes: If your institution has made major changes (e.g., changed admissions requirements, introduced new programs, or revised financial aid policies), it’s important to use data from after those changes took effect. Including data from before these changes could skew your model.

4. Data Recency and Predictive Power

- Recency of Data: More recent data tends to have greater predictive power when forecasting short-term outcomes like likelihood to apply or enroll. Applicant behavior and enrollment decisions can be influenced by shifting social, political, or economic conditions, so older data may not be as predictive for future admissions cycles.

- Trade-off: There’s a balance between including enough historical data to capture reliable patterns and ensuring the data is recent enough to reflect current behavior. Typically, 3-5 years is a good balance, depending on the stability of your institution’s admissions trends.

5. Model Complexity and Overfitting

- Overfitting Risk: Reaching too far back can lead to a form of overfitting, where the model learns idiosyncratic details that apply only to past cycles and not to future applicants. This is especially true if there have been significant changes over time.

- Underfitting Risk: On the other hand, using too few years may lead to underfitting, where the model does not capture important longer-term trends and variability in the data.

6. Cross-Validation and Backtesting

- Cross-Validation: You can use cross-validation (e.g., k-fold) to test how your models perform on different subsets of your data, which helps you check that the model generalizes well across different years.

- Backtesting: Simulate predictions by using older years of data to predict subsequent years. For example, train on data from 2018-2020 and predict behavior in 2021. This helps assess whether including more years of data improves predictive accuracy.
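The backtesting idea above can be sketched as a loop over entry years. To keep the sketch self-contained, the "model" here is a deliberately naive stand-in that predicts next year's yield as the average of the training years. In practice you would refit your actual regression inside the loop. The yield rates are made up for illustration.

```python
def backtest_splits(years, train_window):
    """Yield (training_years, test_year) pairs in chronological order,
    always training on the `train_window` years just before the test year."""
    ordered = sorted(set(years))
    for i in range(train_window, len(ordered)):
        yield ordered[i - train_window:i], ordered[i]


# Made-up yield rates by entry year, for illustration only.
yield_by_year = {2018: 0.22, 2019: 0.21, 2020: 0.17,
                 2021: 0.19, 2022: 0.20, 2023: 0.20}


def mean_abs_error(train_window):
    """Average miss when predicting each test year from the prior window."""
    errors = []
    for train_years, test_year in backtest_splits(yield_by_year, train_window):
        # Stand-in model: average yield over the training years.
        predicted = sum(yield_by_year[y] for y in train_years) / len(train_years)
        errors.append(abs(predicted - yield_by_year[test_year]))
    return sum(errors) / len(errors)


for window in (2, 3):
    print(f"{window}-year window: mean absolute error = {mean_abs_error(window):.4f}")
```

Comparing the error across window lengths is one way to ask whether more history helps at your institution. Keep in mind that different windows are scored on slightly different sets of test years, so treat small differences with caution.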

7. Practical Considerations

- Availability of Data: Use as many years of data as are available and relevant. If historical data is incomplete, has changed formats, or lacks critical variables, it might not be worth including.

- Data Granularity: If you have very detailed data on applicant behavior (e.g., Engagement Scores, web interactions, etc.), fewer years might still provide enough richness for the model to learn from.

Missing Data

Depending on the variables in the model, some data may also be missing: GPA, behaviors that were not always possible (virtual versus in-person events during the pandemic, new forms, etc.), and other issues. Binary logistic regression will typically exclude records with missing data from the training data. And applying the model to current records with missing data (essentially multiplying the coefficient by 0) could drastically change student probabilities, when a missing data strategy could give you much more accurate predictions.

There are a few plans. Maybe not fun or fast, but there are plans. You should always investigate why the data is missing. If a process is broken, incomplete, or missing an element that would bring in the data, fix the process. But if data is truly missing, then let’s look at some of those plans:

- Exclusion: Exclude records with missing data from your analysis and from the future records being predicted on. This is fast and tempting, but as with many fast and tempting options, it probably won’t serve the institution best. Students with missing data are likely an important element of your population and training data, so excluding them will harm your model and its generalizability. And excluding or mispredicting live records will in turn harm any predictions you’re trying to make, possibly to the point where the model does more harm than good.

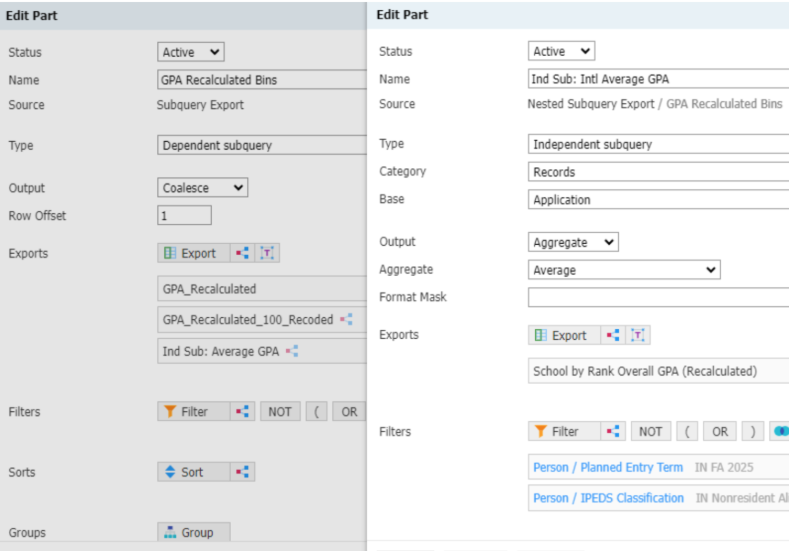

- Replaced Values: Replacing null values for variables like missing GPA with a static value or population average would preserve these records for use in the training data and allow the model automation to work on the record. Coalescing this data (setting up a series of fallback variables, each used only if the previous one is missing/null) in a Slate export is still within our Quickster’s fast and easy super powers. It can be done in the Automation Queries, or in a Rule or separate Query writing to a Field if the computational power can’t be spared in the Automation Query.

- Predictive Values: Create additional regressions to predict the null values based on other data and engagement behaviors. These imputational regressions can again be run in the Slate queries or in separate Rules/Queries that write to a field specifically designed to hold imputed variables. Keeping imputed values in their own field matters: you don’t want a predicted GPA showing up in reports just because it was written to the standard field for use in Predictive Modeling.

While regression-based imputation is the most time-consuming option, it is a much better way to balance your model and account for the inconsistencies in your data, especially if you are including Pandemic-era data in your model where you’re likely to have a broad range of expected values that are very nuanced.

Think about predicting in-person attendance for students whose recruitment cycle happened when there were no (or limited) in-person events. If you include event attendance in your model, these people will be coded as 0 (or null, depending on your query). In your training data, that will pull your effect size for attendance towards 0.

Some of these people would have visited your campus if they had the chance. So if you use a regression based on other Slate data to predict attendance, then you’ll more accurately build your main outcome model. Additional touches like Stochastic Regression Imputation (adding random error from the residuals of the model) and Predictive Mean Matching (using observed values to replace missing data from records with similar predicted means) may increase data quality but are more time consuming and would be harder to program into Slate to run automatically.
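Here is a minimal sketch of regression imputation and its stochastic variant, using missing GPA as the example. It assumes a single hypothetical predictor (`engagement_score`) and made-up records. A real imputation model would likely use several variables, and a yes/no variable like attendance would call for a logistic model instead. Note that the imputed value is written to its own `gpa_imputed` field so the original stays untouched.

```python
import random


def fit_line(xs, ys):
    """Ordinary least squares for y = intercept + slope * x."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("predictor has no variation")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return mean_y - slope * mean_x, slope


def impute_gpa(records, stochastic=False, seed=0):
    """Return copies of `records` with a separate 'gpa_imputed' field.

    Observed GPAs are copied as-is. Missing GPAs (None) are predicted from
    engagement_score. With stochastic=True, a randomly drawn residual from
    the fitted model is added to each prediction.
    """
    observed = [r for r in records if r["gpa"] is not None]
    xs = [r["engagement_score"] for r in observed]
    ys = [r["gpa"] for r in observed]
    intercept, slope = fit_line(xs, ys)
    residuals = [y - (intercept + slope * x) for x, y in zip(xs, ys)]
    rng = random.Random(seed)

    result = []
    for r in records:
        value = r["gpa"]
        if value is None:
            value = intercept + slope * r["engagement_score"]
            if stochastic:
                value += rng.choice(residuals)
        result.append({**r, "gpa_imputed": value})
    return result


# Made-up records, for illustration only.
students = [
    {"engagement_score": 10, "gpa": 2.8},
    {"engagement_score": 25, "gpa": 3.1},
    {"engagement_score": 40, "gpa": 3.6},
    {"engagement_score": 55, "gpa": 3.7},
    {"engagement_score": 30, "gpa": None},
]
for row in impute_gpa(students):
    print(row)
```

The stochastic option keeps imputed values from sitting exactly on the regression line, which would otherwise make the filled-in records look more predictable than real students are.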

The lower entry plans would include replacing the missing datapoints with an average of the remaining population or some otherwise determined static value. These solutions would not require running additional models in SPSS. In fact, they can be calculated in Slate, even tailoring the averages live with independent subqueries. But this plan is far hollower than the imputational regressions, which will likely give you more accurate predictions. There are other methods, but these are a good starting point with a broad level of intensity.
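The lower entry plans are simple enough to sketch in a few lines. The helpers below are illustrative Python equivalents of the logic you would build in a query, not Slate functionality, and the GPA values are made up.

```python
def coalesce(*values):
    """Return the first value that is not None, like SQL COALESCE."""
    for value in values:
        if value is not None:
            return value
    return None


def fill_with_mean(values):
    """Replace missing entries (None) with the mean of the observed ones."""
    observed = [v for v in values if v is not None]
    if not observed:
        raise ValueError("no observed values to average")
    mean = sum(observed) / len(observed)
    return [mean if v is None else v for v in values]


# Fallback chain: official GPA, then self-reported GPA, then a static default.
print(coalesce(None, 3.4, 3.0))

# Population-average replacement for a column with gaps.
print(fill_with_mean([3.0, None, 4.0]))
```

Both approaches keep the record in play, but every student with missing data receives the same fallback, which is the trade-off against the imputational regressions.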

Conclusion

Effective Predictive Modeling requires a nuanced approach to data selection and variable importance, grounded in both statistical methods and domain knowledge. By being mindful of overfitting and avoiding the temptation to go fishing for correlations, you can enhance the Model’s predictive power. Addressing issues like missing data thoughtfully ensures broader applicability. These strategies will help you build robust Models that accurately reflect your institution’s unique context and adapt to the evolving landscape of higher education.
